Designing a context-aware system that acts on models, metadata, and workflows — embedded directly where engineers and analysts work.

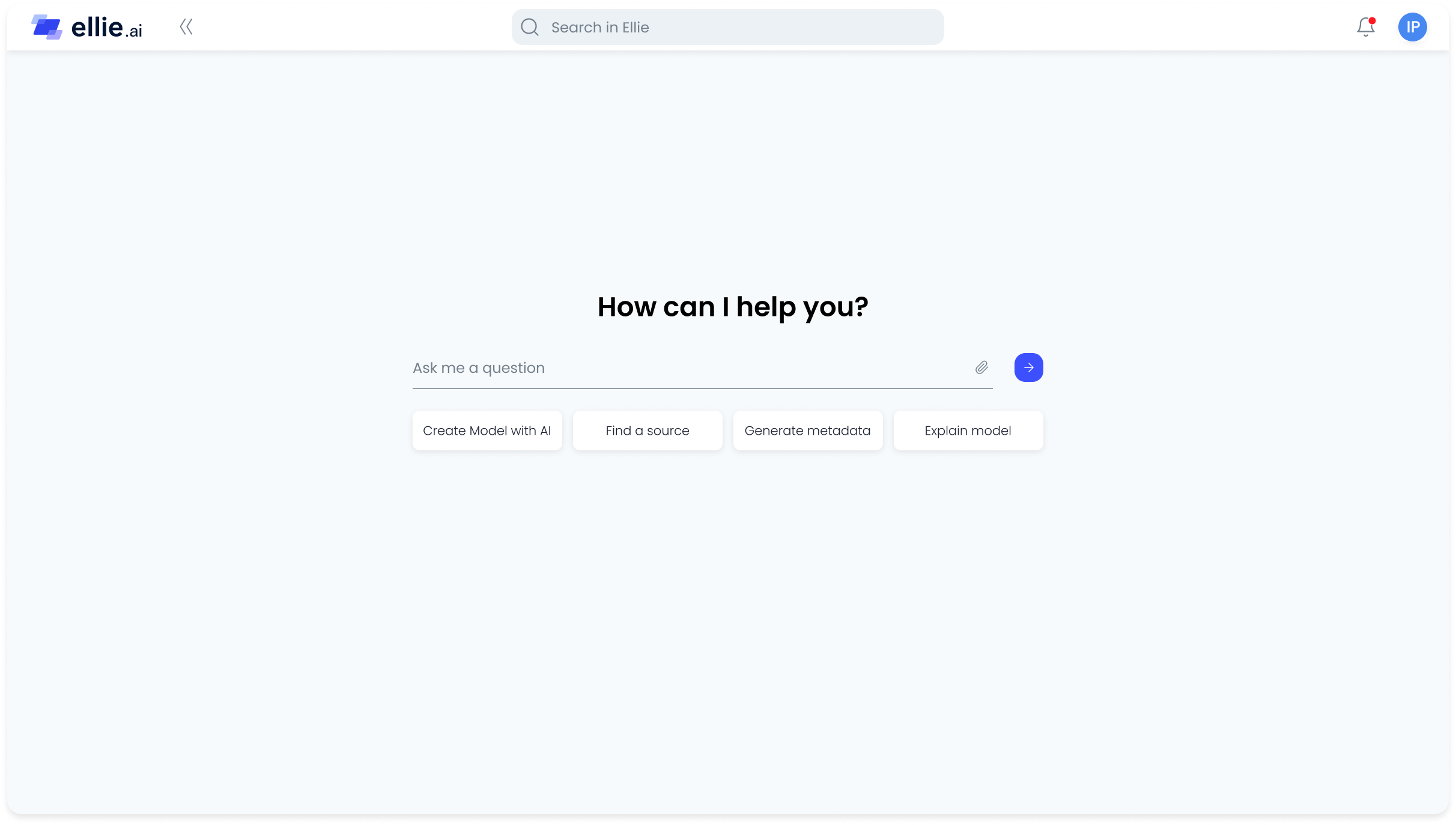

The rise of generative AI created an opportunity to improve how enterprise data modeling workflows operate. Rather than launching a standalone chatbot, we embedded AI directly into core product workflows, helping users move from problem to resolution faster, explore models with greater confidence, and make complex source systems easier to understand and document.

The goal was workflow acceleration grounded in context and trust: AI that knows what you're working on, acts within it, and explains its reasoning.

Enterprise data modeling is rarely blocked by the act of drawing entities. It is blocked by context — specifically, the lack of it. Across industry conversations, analyst forums, and customer interviews, the same themes surfaced repeatedly: getting started is difficult, understanding complex source schemas is overwhelming, and a significant portion of time is spent on repetitive metadata and documentation work.

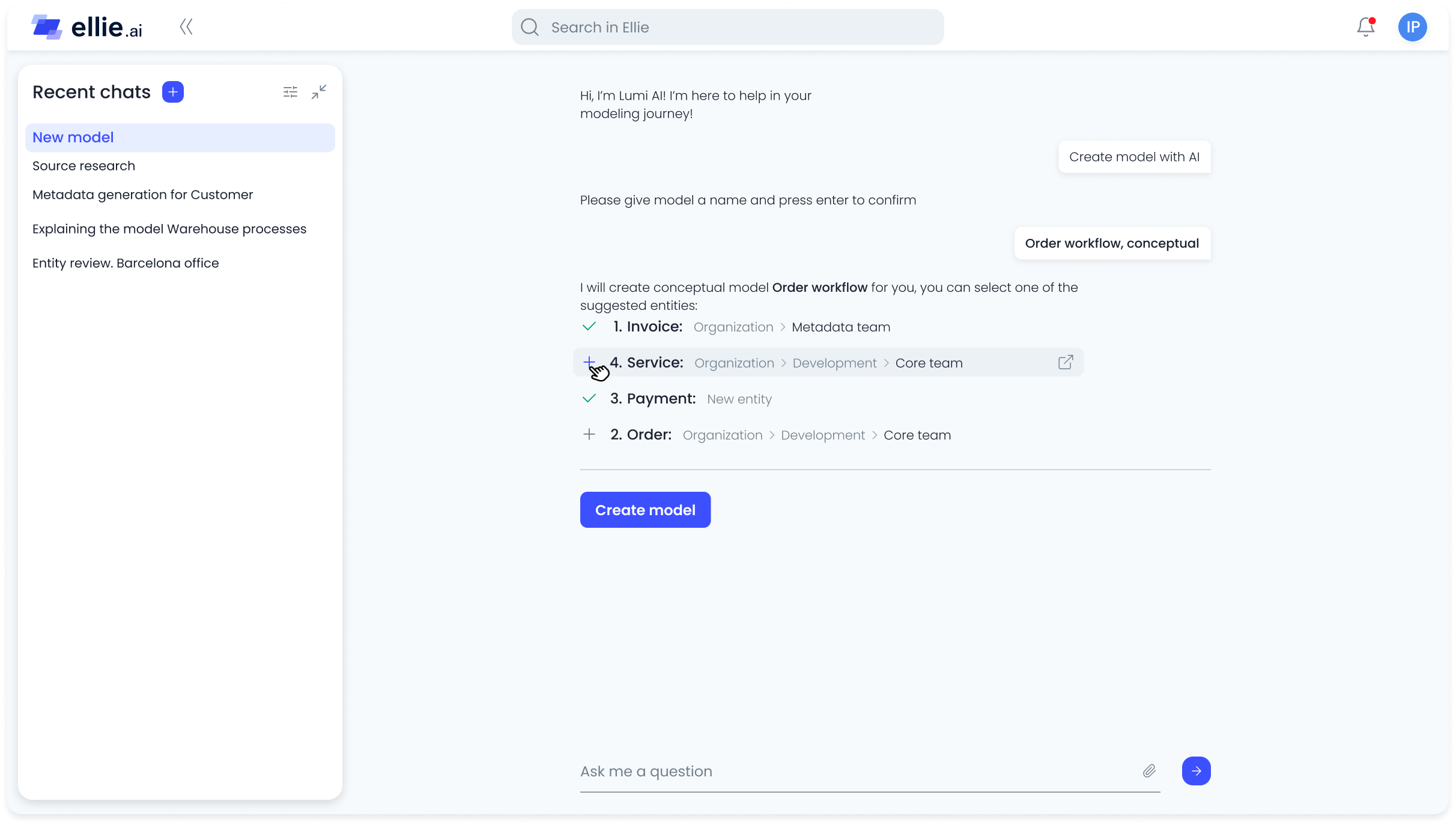

Another critical barrier was trust. AI could accelerate workflows, but only if users trusted its output enough not to re-verify everything by hand. Any AI-generated output therefore had to be editable, explainable, and controllable.

I led the design initiative in collaboration with AI engineers, product managers, and core platform teams. The scope covered every AI-powered workflow in the product — from the first draft model generator to the conversational agent layer.

I defined AI-supported interaction patterns across modeling and discovery surfaces, designed the trust and transparency layer, and ensured the experience remained cohesive across the platform — regardless of which AI feature a user was interacting with.

Rather than a standalone AI interface, we integrated intelligence directly into the surfaces where users were already working — the canvas, the source explorer, the documentation panel. Each AI feature was designed to reduce a specific cognitive load, not to be impressive for its own sake.

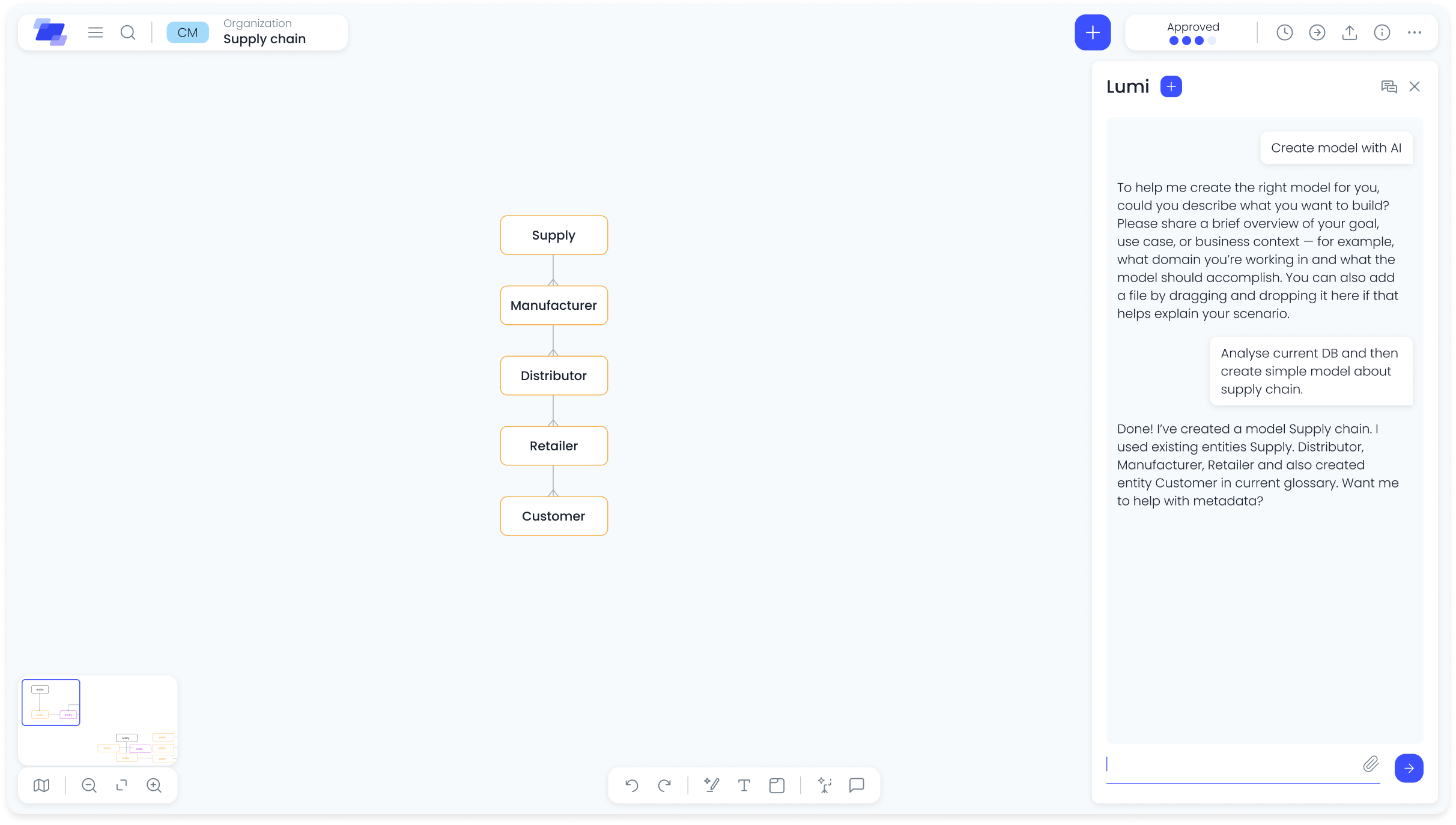

Users paste or describe business context and receive a structured conceptual model as a starting point. This eliminates the blank-canvas barrier and reduces time-to-first-draft from hours to minutes.

AI supports refinement directly within the live model — suggesting relationships, flagging structural inconsistencies, and offering stakeholder-friendly explanations of technical decisions without leaving the canvas.

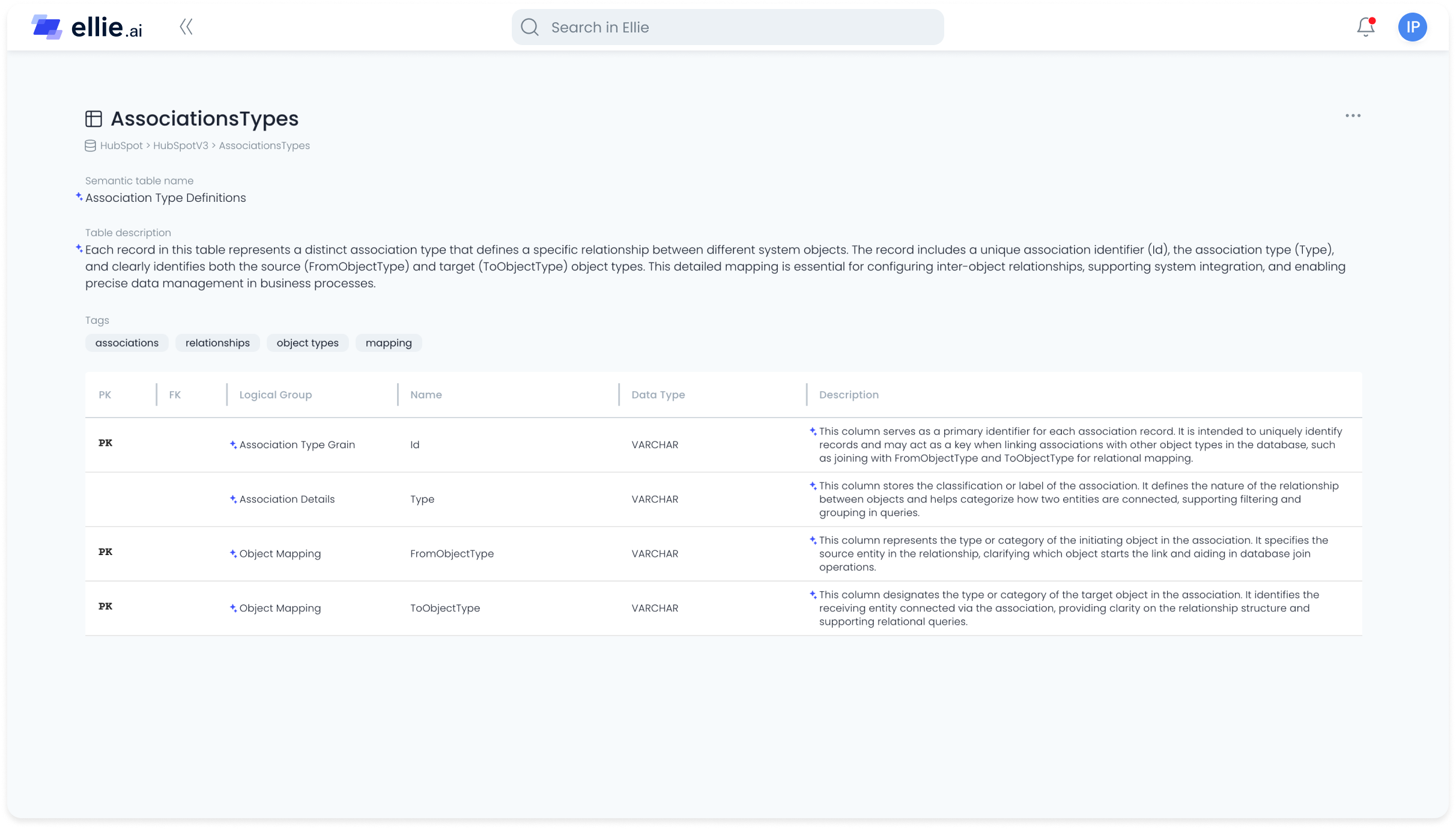

The AI generates semantic metadata and clarifies technical schemas, working in either a sample-data mode or a metadata-only mode. It turns opaque source tables into documented, understandable assets without requiring manual annotation.

Lumi works directly with models and source assets — creating entities, editing relationships, suggesting structures, and generating documentation based on full system context. It operates within the model, not just alongside it.

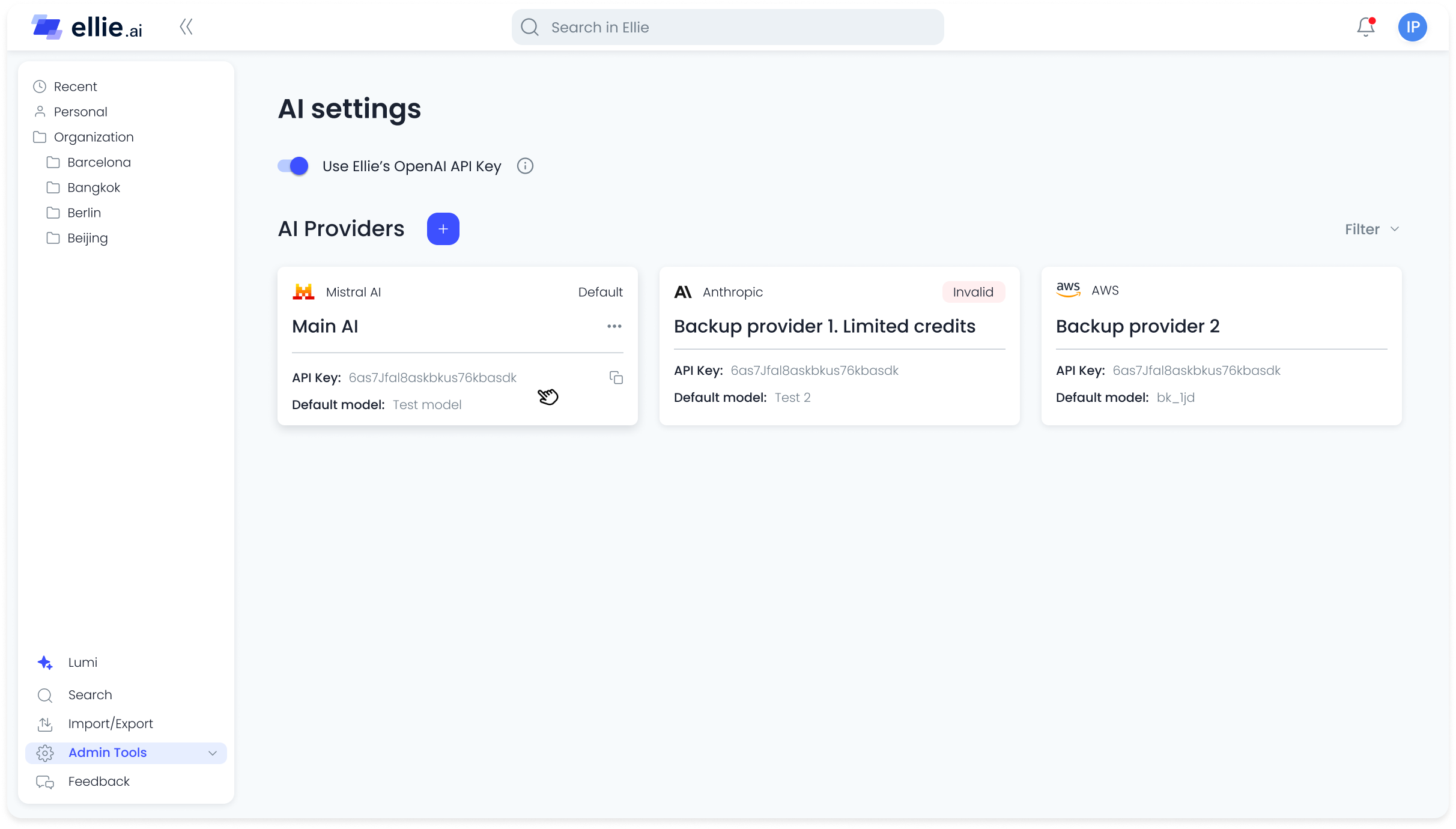

BYOK (Bring Your Own Key) configuration and strict data boundary enforcement ensured enterprise-grade privacy. Organizations could connect their own AI providers and define exactly what data was exposed — making adoption viable even in regulated industries.
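To make the data boundary idea concrete, here is a minimal sketch of how a per-organization configuration might gate what leaves the platform. Every name in it (`ProviderConfig`, `ExposureMode`, `SourceTable`, `build_payload`) is hypothetical and chosen for illustration; it is not the product's actual schema or API. It assumes two exposure levels, matching the metadata-only and sample-data modes described earlier.

```python
from dataclasses import dataclass
from enum import Enum


class ExposureMode(Enum):
    """Hypothetical exposure levels an organization could choose."""
    METADATA_ONLY = "metadata_only"   # table and column names/types only
    SAMPLE_DATA = "sample_data"       # metadata plus a capped number of rows


@dataclass(frozen=True)
class ProviderConfig:
    """Illustrative BYOK settings; field names are assumptions."""
    provider: str             # label for the customer's chosen AI provider
    api_key_env: str          # env var that holds the customer's own key
    exposure: ExposureMode
    max_sample_rows: int = 5  # hard cap applied in SAMPLE_DATA mode


@dataclass
class SourceTable:
    name: str
    columns: dict[str, str]           # column name -> data type
    rows: list[dict[str, object]]     # actual records from the source


def build_payload(table: SourceTable, config: ProviderConfig) -> dict:
    """Return only the fields the configured boundary allows to be sent."""
    payload: dict = {"table": table.name, "columns": dict(table.columns)}
    if config.exposure is ExposureMode.SAMPLE_DATA:
        # Rows are included only when explicitly enabled, and always capped.
        payload["sample_rows"] = table.rows[: config.max_sample_rows]
    return payload
```

In this sketch the boundary is enforced at the single point where the outbound request is assembled, so row data cannot reach the provider unless the organization has opted in.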

Results are based on internal usage data, time-tracking analysis, and user satisfaction surveys.